In the days after a gunman killed five people at a gay nightclub in Colorado last month, much of social media lit up with the now familiar expressions of grief, mourning and disbelief.

But on some online message boards and platforms, the tone was celebratory. “I love waking up to great news,” wrote one user on Gab, a platform popular with far-right groups. Other users on the site called for more violence.

The hate is not limited to fringe sites. On Twitter, YouTube and Facebook, researchers and LGBTQ advocates have tracked an increase in hate speech and threats of violence directed at LGBTQ people, groups and events, with much of it aimed at transgender people.

The content comes after conservative lawmakers in several states introduced dozens of anti-LGBTQ measures and amid a wave of threats targeting LGBTQ groups, as well as hospitals, health care workers, libraries and private businesses that support them.

“I don’t think people understand the state of danger that we’re living in right now,” said Jay Brown, senior vice president at the Human Rights Campaign and a transgender man. “A lot of that is happening online, and online threats are turning into threats of real violence offline.”

Hospitals in Boston, Pittsburgh, Phoenix, Washington, D.C., and other cities have received bomb threats and other harassing messages after misleading claims spread online about transgender care programs.

In Tennessee, masked members of a white supremacist group showed up recently at a holiday charity event at a bookstore because the evening’s entertainment included a drag performer. A party at an adults-only gay nightclub scheduled for before Christmas was also the subject of threats. The party’s theme? Ugly Christmas sweaters.

“And they’re still coming after us? It’s just straight up bigotry and hatred at this point,” said Jessica Patterson, one of the organizers of the event, who noted that groups calling for violence against LGBTQ groups often espouse other bigotries too. “They just have to hate someone.”

The transphobic content targeting events such as Patterson’s is just a subset of the hateful content about Jews, Muslims, women, Black people, Asians and others that has internet safety advocates and an increasing number of lawmakers in the United States and elsewhere pushing for tougher regulations that would force tech companies to do more.

There is no simple explanation for the increase in hate speech documented by researchers in recent years. Socio-economic stress caused by the COVID-19 pandemic, increased political polarization and resurgent far-right movements have all been blamed. So have politicians such as Donald Trump, whose brash use of social media emboldened extremists online.

“I’ve been tracking hate-fueled extremist communities for more than 25 years but I’ve never seen hate speech — let alone the calls for violence that they spark — reach the volume they have now,” extremism researcher Rita Katz wrote in an email to The Associated Press.

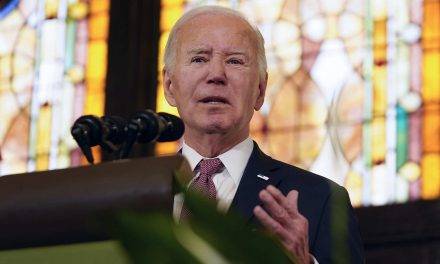

Katz is co-founder of SITE Intelligence Group, which monitors far-right internet sites and has identified dozens of threats against LGBTQ groups and events in the U.S. in recent months. SITE released a bulletin recently detailing death threats against drag performers after one appeared at the White House bill signing of the Respect for Marriage Act.

Researchers at the Center for Countering Digital Hate, a nonprofit with offices in the U.S. and United Kingdom, studied the social media messages that spread immediately after the Colorado Springs shooting in November and found many examples of far-right Trump supporters celebrating the carnage. The users who didn’t praise the shooting often claimed it was faked by authorities and the media as a way to make conservatives look bad.

Online hate speech has been linked to offline violence in the past, and many of the perpetrators of recent mass shootings were later found to be immersed in online worlds of bigotry and conspiracy theories.

Officials in a number of countries have cited social media as a key factor in extremist radicalization, and have warned that COVID restrictions and lockdowns have given extremist groups a powerful recruiting tool.

Despite rules prohibiting hate speech or violent threats, platforms such as Facebook and YouTube have struggled to identify and remove such content. In some cases, it’s because people use coded language designed to evade automated content moderation.

Then there is Twitter, which saw a surge in racist, anti-Semitic and homophobic content following its purchase by Elon Musk, a self-described free speech absolutist. Musk himself posted a tweet in December that mocked transgender pronouns, as well as another misleadingly suggesting that Yoel Roth, Twitter’s former head of trust and safety, had supported letting children into gay dating apps.

Roth, who is gay, went into hiding after receiving a deluge of threats following Musk’s tweet.

“He (Musk) didn’t use the word ‘groomer’ but that’s the subtext of his tweet is that Yoel Roth is a groomer,” said Bhaskar Chakravorti, dean of global business at the Fletcher School at Tufts University, who has created a “Musk Monitor” tracking hate speech on the site.

“If the owner of Twitter himself is pushing false and hateful content against his former head of safety, what can we expect from this platform?” Chakravorti said.